One Nav at a Time: How We Stopped Feeding the Legacy and Started Replacing It

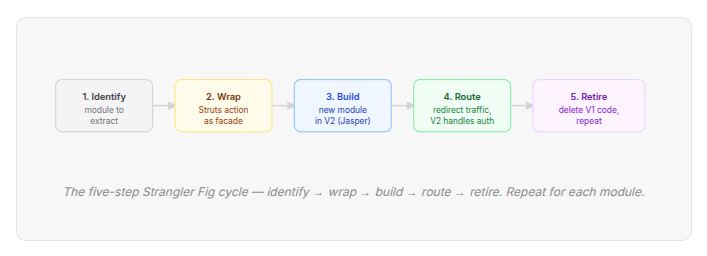

In software engineering, the most dangerous word is “rewrite.” Complete rewrites are high-risk, expensive, and often fail to deliver value until the very end. We chose a different path: the Strangler Fig Pattern, a methodical approach to building a modern system around the edges of a legacy monolith until the new architecture eventually becomes the host.

🌱 What Is the Strangler Fig Pattern?

The name comes from the strangler fig tree — a plant that germinates in the canopy of a host tree and slowly grows downward, wrapping around the trunk until it becomes a self-supporting structure. The host tree doesn’t get cut down. It simply becomes less and less essential as the fig takes over, until one day it’s hollow and the fig is the only thing left standing.

Applied to software, the pattern works like this: you don’t rewrite the legacy system — you build alongside it. New features go into the new system. Old modules get migrated one at a time, with a routing layer directing traffic to whichever implementation is live for each surface. The legacy codebase shrinks with every migration. The new system grows. At no point is the old system taken offline mid-flight, and at no point does the business have to wait for a complete rewrite to ship. The risk is distributed across months of incremental work instead of concentrated in a single cutover event.

🔨 The “Whack-a-Mole” Reality

Before this migration, our legacy CX system was a massive Java Struts monolith with over 1,000 files and a hand-rolled JDBC layer. It lacked an ORM — every query was hand-mapped SQL with no type safety and no enforced schema ownership.

We lived in a “Whack-a-Mole” culture. Fix the reminder logic in Send Flow, and the Analytics counts drift. Tighten a validation rule in Admin Setup, and a Deploy Survey action you’d never heard of starts throwing NullPointerException in production because it had silently depended on the looser behaviour for years. We weren’t slow because we were careless. We were slow because the system punished speed.

⚡ The Catalyst: Centralized Root Cause Module

Some time ago, we hit a turning point. We needed to build a new feature: Centralized Root Cause (CRC), a complex churn-risk analyser. CRC wasn’t uniquely complex, though; we’d built harder things in the monolith before. What made it different was that it was a natural seam: a new surface, no legacy entanglement, a clean API boundary. Adding it to the monolith would have meant more entanglement and more cleanup later, so we used it as the forcing function to stop feeding the monolith and start replacing it.

Instead, we chose to break this pattern and launched a new repository, Version2, with a new tech stack and a strategic architectural hard stop:

The Nav-Bar Mandate: If a new feature requires a navigation bar, it must live in the new tech stack (NestJS + React) in the Version2 repo. No new nav bars are to be added to legacy code. This single rule became our architectural enforcement mechanism.
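The post doesn't say how the mandate is policed day to day. One way to make it mechanical, sketched here under loud assumptions: nav tabs are declared in a registry that records which stack serves each one, and a CI step compares the V1 entries against a frozen baseline. `NavEntry`, `LEGACY_BASELINE`, `checkNavMandate`, and the "reports" tab are all hypothetical.

```typescript
// Hypothetical shape of a nav-bar registry entry; not the real Version2 config.
type Stack = "v1" | "v2";

interface NavEntry {
  id: string;    // stable identifier for the nav tab
  label: string; // text shown to the user
  stack: Stack;  // which system serves this tab
}

// Hypothetical frozen baseline: the legacy tabs that existed when the mandate started.
const LEGACY_BASELINE: ReadonlySet<string> = new Set(["admin-setup", "send-flow", "analytics"]);

// Returns ids of V1 entries missing from the baseline, i.e. new legacy nav bars.
function checkNavMandate(entries: NavEntry[]): string[] {
  return entries
    .filter((e) => e.stack === "v1" && !LEGACY_BASELINE.has(e.id))
    .map((e) => e.id);
}

const registry: NavEntry[] = [
  { id: "admin-setup", label: "Admin Setup", stack: "v1" },
  { id: "root-cause", label: "Root Cause", stack: "v2" },
  { id: "reports", label: "Reports", stack: "v1" }, // a new legacy tab: should be flagged
];

const violations = checkNavMandate(registry);
if (violations.length > 0) {
  // A real CI step would also exit non-zero here.
  console.error(`Nav-Bar Mandate violated by: ${violations.join(", ")}`);
}
```

Because the baseline is frozen, the only place a feature can gain a new nav tab is on the V2 side; the baseline itself only ever shrinks as modules migrate.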

🏗️ Wrapping the Legacy

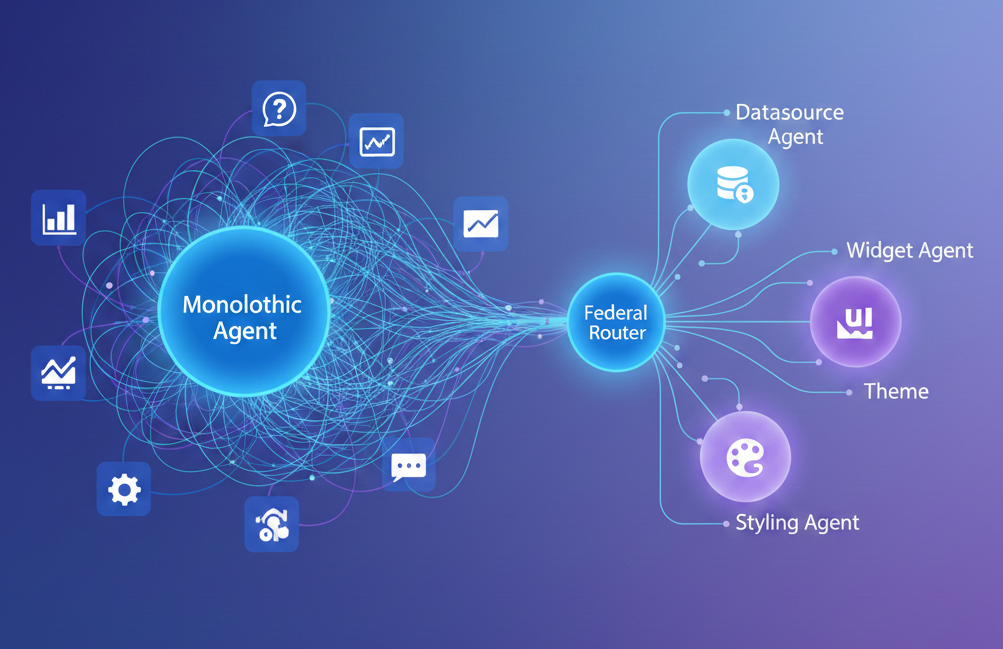

We didn’t pivot to a complex microservices mesh. Instead, we adopted an App-Service architecture: isolating specific business domains into focused, manageable services that communicate through well-defined interfaces while sharing necessary infrastructure.

The Strangler Fig works by wrapping the old system. We used our Navigation Bar as the routing layer. To the user, the experience is seamless. Behind the scenes, we route traffic between V1 (the legacy monolith) and V2 (Version2). When a module has migrated, the corresponding Struts action redirects the request to Version2’s API. Version2 then handles authentication and authorisation independently — validating the session, verifying permissions, and serving the response.
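To illustrate the handoff, here is a framework-agnostic sketch of the checks Version2 performs on a redirected request: validate the session, verify permissions, then serve. The session store, the permission names, and `handleRedirected` are hypothetical; in a NestJS codebase this logic would more typically live in a guard than in a plain function.

```typescript
// Hypothetical session record; the real Version2 session model is not described in the post.
interface Session {
  userId: string;
  expiresAt: number; // epoch milliseconds
  permissions: Set<string>;
}

type Result =
  | { status: 200; body: string }
  | { status: 401 | 403; error: string };

// In-memory stand-in for whatever session store Version2 actually uses.
const sessions = new Map<string, Session>();

function handleRedirected(
  sessionId: string | undefined,
  requiredPermission: string,
  serve: (userId: string) => string,
  now: number = Date.now(),
): Result {
  // 1. Validate the session: it must exist and must not have expired.
  const session = sessionId ? sessions.get(sessionId) : undefined;
  if (!session || session.expiresAt <= now) {
    return { status: 401, error: "invalid or expired session" };
  }
  // 2. Verify permissions again, independently of anything V1 checked.
  if (!session.permissions.has(requiredPermission)) {
    return { status: 403, error: `missing permission: ${requiredPermission}` };
  }
  // 3. Serve the response.
  return { status: 200, body: serve(session.userId) };
}

sessions.set("s-123", {
  userId: "u-1",
  expiresAt: Date.now() + 60000,
  permissions: new Set(["root-cause:read"]),
});

const ok = handleRedirected("s-123", "root-cause:read", (u) => `root causes for ${u}`);
const denied = handleRedirected("s-123", "deploy:write", (u) => u);
```

One benefit of re-checking rather than trusting the redirect: a V2 module stays protected even if a Struts action is misconfigured or bypassed.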

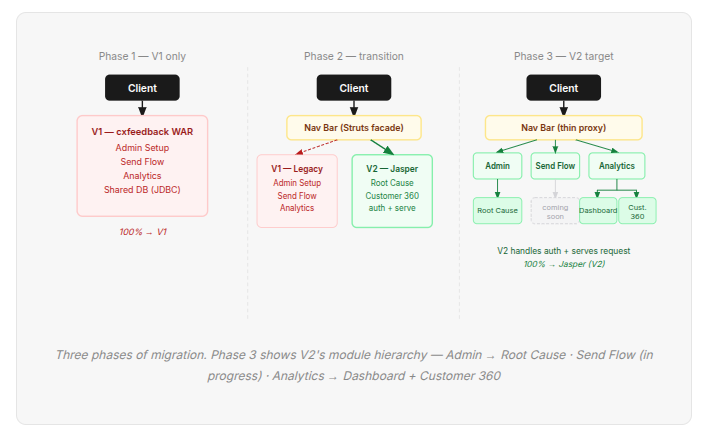

The Three Phases

Phase 1: V1 only. The starting state. Every client request goes directly to the legacy system, with no routing layer and no abstraction. Admin Setup, Send Flow, and Analytics all live inside the same deployable, sharing the same JDBC layer and the same database. There is no seam to exploit. The monolith is the entire system.

Phase 2: Transition. This is where the Strangler Fig actually begins. A Nav Bar built on the Struts facade is introduced as the routing layer, the single seam between legacy and new. The client never talks to V1 or V2 directly; it talks to the nav bar, which decides where to send the request. V1 continues running unchanged: Admin Setup, Send Flow, and Analytics still live in the legacy WAR and handle their existing traffic. Meanwhile, V2 comes online alongside it, initially serving only the new modules, Root Cause and Customer360. The two systems coexist in production. This coexistence is deliberate rather than a stopgap: Phase 2 is the stable operating state for the bulk of the migration.
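A minimal sketch of the nav bar's Phase 2 routing decision, assuming each nav tab maps to a module with a recorded owner. The module names mirror the article; the paths, `ModuleRoute`, and `resolveNavTarget` are hypothetical.

```typescript
type Owner = "v1" | "v2";

interface ModuleRoute {
  owner: Owner;
  v1Path?: string; // legacy Struts action, while V1 still serves the module
  v2Path?: string; // Version2 route, once the module has migrated
}

// Hypothetical Phase 2 table: V1 keeps its modules, V2 serves only the new ones.
const routes: Record<string, ModuleRoute> = {
  "admin-setup": { owner: "v1", v1Path: "/legacy/adminSetup.do" },
  "send-flow": { owner: "v1", v1Path: "/legacy/sendFlow.do" },
  analytics: { owner: "v1", v1Path: "/legacy/analytics.do" },
  "root-cause": { owner: "v2", v2Path: "/v2/root-cause" },
  customer360: { owner: "v2", v2Path: "/v2/customer360" },
};

// The client only ever asks the nav bar; the nav bar picks the live implementation.
function resolveNavTarget(moduleId: string): string {
  const route = routes[moduleId];
  if (!route) throw new Error(`unknown module: ${moduleId}`);
  const path = route.owner === "v2" ? route.v2Path : route.v1Path;
  if (!path) throw new Error(`module ${moduleId} has no ${route.owner} path`);
  return path;
}
```

Under this design, migrating a module is a small, reviewable change: add its `v2Path` and flip `owner` to `"v2"`. Leaving `v1Path` in place until the legacy action is deleted keeps a rollback path open.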

Phase 3: V2 Target. The end state isn’t fully realised yet; this is where we’re headed. The nav bar thins from a Struts facade into a lightweight proxy whose only job is to route requests to the right Version2 module. V1 is still running, but its surface area keeps shrinking. The work currently in flight: moving Deploy and Dashboard out of Analytics in the legacy WAR and into their own modules in Version2. Once those land, the module hierarchy in V2 will reflect how the product actually works, with Root Cause, Dashboard, and Deploy as independent modules. The monolith doesn’t disappear in a single moment. It just runs out of things to do.

⚙️ The Technical Standard

Moving to a new stack wasn’t just a language change — it was a shift to a modern service standard that made the failure modes of the old system structurally impossible.

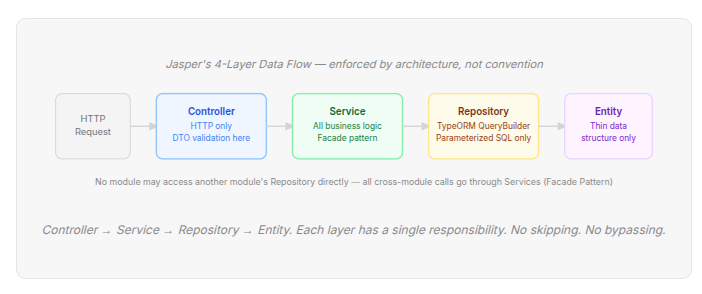

The 4-Layer Data Flow

No module may access another module’s Repository directly. All cross-module calls go through Services (Facade Pattern).
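A minimal, framework-free sketch of the four layers and the facade rule, using two hypothetical modules. In the real codebase these would be NestJS controllers and providers backed by TypeORM repositories; here an in-memory repository stands in so the example runs on its own. All class names are illustrative.

```typescript
// ---- Root Cause module ----
// Entity: the shape of a persisted row.
interface RootCauseEntity {
  id: number;
  surveyId: number;
  label: string;
}

// Repository: the only layer that touches storage (in-memory stand-in for TypeORM).
class RootCauseRepository {
  private rows: RootCauseEntity[] = [
    { id: 1, surveyId: 10, label: "Slow support" },
    { id: 2, surveyId: 10, label: "Pricing" },
    { id: 3, surveyId: 11, label: "Pricing" },
  ];
  findBySurvey(surveyId: number): RootCauseEntity[] {
    return this.rows.filter((r) => r.surveyId === surveyId);
  }
}

// Service: business logic, and the module's public facade.
class RootCauseService {
  private readonly repo: RootCauseRepository;
  constructor(repo: RootCauseRepository) {
    this.repo = repo;
  }
  labelsForSurvey(surveyId: number): string[] {
    return this.repo.findBySurvey(surveyId).map((r) => r.label);
  }
}

// Controller: HTTP concerns only (parse the path param, shape the response).
class RootCauseController {
  private readonly service: RootCauseService;
  constructor(service: RootCauseService) {
    this.service = service;
  }
  get(params: { surveyId: string }): { labels: string[] } {
    return { labels: this.service.labelsForSurvey(Number(params.surveyId)) };
  }
}

// ---- Customer360 module ----
// Depends on RootCauseService (the facade), never on RootCauseRepository.
class Customer360Service {
  private readonly rootCauses: RootCauseService;
  constructor(rootCauses: RootCauseService) {
    this.rootCauses = rootCauses;
  }
  summary(surveyId: number): string {
    const labels = this.rootCauses.labelsForSurvey(surveyId);
    return `${labels.length} root cause(s): ${labels.join(", ")}`;
  }
}

const rootCauseService = new RootCauseService(new RootCauseRepository());
const controller = new RootCauseController(rootCauseService);
const c360 = new Customer360Service(rootCauseService);
```

The dependency is visible in the constructors: if Root Cause later changes how it stores rows, only `RootCauseRepository` and `RootCauseService` change, and Customer360 is untouched.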

What this means in practice

- 4-Layer Data Flow: Controller → Service → Repository → Entity. Controllers handle HTTP only. All business logic lives in Services. All DB queries use parameterized TypeORM QueryBuilder: no raw SQL, no hand-mapped JDBC.

- Facade Pattern: No module may access another module’s repository directly. Cross-module interaction goes exclusively through the exposed Service. This is the structural fix for the Whack-a-Mole problem.

- DTO Validation: All inbound payloads are validated using class-validator at every entry point. Magic-string bugs and unvalidated inputs never reach business logic.
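The real code uses class-validator decorators on DTO classes. Since that package isn't available in a standalone snippet, the sketch below hand-rolls the same idea for a hypothetical `CreateDeployDto`: reject malformed payloads, including misspelled "magic string" values, before any Service runs. The field names, the allowed channel list, and `validateCreateDeploy` are assumptions.

```typescript
// Hypothetical DTO for a Deploy request; field names and channels are assumptions.
const CHANNELS = ["email", "sms", "link"] as const;
type Channel = (typeof CHANNELS)[number];

interface CreateDeployDto {
  surveyId: number;
  channel: Channel;
  recipients: string[];
}

type Validation<T> = { ok: true; value: T } | { ok: false; errors: string[] };

// Runs at the entry point: only a fully valid payload ever reaches a Service.
function validateCreateDeploy(input: unknown): Validation<CreateDeployDto> {
  if (typeof input !== "object" || input === null) {
    return { ok: false, errors: ["body must be a JSON object"] };
  }
  const body = input as Record<string, unknown>;
  const surveyId = body["surveyId"];
  const channel = body["channel"];
  const recipients = body["recipients"];
  const errors: string[] = [];

  if (typeof surveyId !== "number" || !Number.isInteger(surveyId) || surveyId <= 0) {
    errors.push("surveyId must be a positive integer");
  }
  // A fixed list stops misspelled magic strings (e.g. "emial") at the boundary.
  if (typeof channel !== "string" || (CHANNELS as readonly string[]).indexOf(channel) === -1) {
    errors.push(`channel must be one of: ${CHANNELS.join(", ")}`);
  }
  // Crude address check for illustration: a string with something before an "@".
  if (
    !Array.isArray(recipients) ||
    recipients.length === 0 ||
    !recipients.every((r) => typeof r === "string" && r.indexOf("@") > 0)
  ) {
    errors.push("recipients must be a non-empty array of email addresses");
  }
  if (errors.length > 0) {
    return { ok: false, errors };
  }
  // Build the DTO explicitly so unknown fields in the payload are dropped.
  return {
    ok: true,
    value: { surveyId: surveyId as number, channel: channel as Channel, recipients: recipients as string[] },
  };
}
```

Collecting every error instead of stopping at the first lets a client fix a bad request in one round trip.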

📊 A Tale of Two Eras

| Activity | V1 — The Monolithic Era | V2 — The App-Service Era |
|---|---|---|
| Finding a bug | Deep-sea diving in “Spaghetti” 🍝 — trace spans 3 modules, 1 log stream, no IDs | Surgical logging within a specific service 🎯 — structured logs, trace IDs, Prometheus metrics |
| Adding a feature | High risk of breaking unrelated pipelines 🙏 — shared tables, no ownership | A localised update to a dedicated domain ✉️ — module owns its schema and CI/CD |
| Documentation | Deciphering code comments from 2015 📜 — no contracts, no structure | Automated OpenAPI/Swagger docs 📖 — generated from DTOs and decorators |
| Build velocity | Monthly “Big Bang” sprints 🗓️ — one WAR, everything or nothing | Fast, continuous builds 🚀 — each service deploys independently |
| Testing | Deploy and pray — production was the test environment | 100% E2E via Testcontainers (real MySQL + Redis) — confidence before every deploy |

🚀 Impact: Speed, Stability, and Sanity

By adopting this pattern, we didn’t just clean up our code — we unlocked the business.

⚡ Velocity: From monthly “Big Bang” releases to fast, continuous builds. Each service ships on its own schedule.

🛡️ Reliability: With 100% E2E coverage using Testcontainers, we no longer “deploy and pray.” Every test run uses real MySQL and Redis.

📊 Customer360: We built a high-performance churn analysis tool in record time, proving the new architecture handles complex data aggregation far faster than the monolith ever could.

🔭 What We’ve Shipped — and What’s Next

✅ Already shipped in V2

- Centralized Root Cause (CRC) — greenfield module, zero legacy dependency. New tables, new NestJS module, new React frontend. V1 untouched.

- Customer360 — AI-powered churn risk analysis across all workspace surveys: aggregated NPS scoring, top 10 root causes, per-customer severity, and action items. It shares some V1 data inputs during the transition but is fully owned by V2.
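For readers unfamiliar with the metric: Net Promoter Score treats scores of 9 or 10 as promoters and 0 to 6 as detractors, and reports the percentage of promoters minus the percentage of detractors, giving a value between -100 and 100. A minimal sketch of per-customer NPS and a top-N root-cause ranking, assuming a hypothetical `SurveyResponse` shape; the post doesn't describe Customer360's data model or how its AI step tags root causes.

```typescript
// Hypothetical response shape; rootCause is assumed to be tagged upstream.
interface SurveyResponse {
  customerId: string;
  score: number;      // 0 to 10
  rootCause?: string;
}

// NPS = % promoters (9-10) minus % detractors (0-6), rounded to a whole number.
function nps(scores: number[]): number | null {
  if (scores.length === 0) return null; // undefined for an empty sample
  const promoters = scores.filter((s) => s >= 9).length;
  const detractors = scores.filter((s) => s <= 6).length;
  return Math.round(((promoters - detractors) / scores.length) * 100);
}

function npsByCustomer(responses: SurveyResponse[]): Map<string, number | null> {
  const grouped = new Map<string, number[]>();
  for (const r of responses) {
    const scores = grouped.get(r.customerId) ?? [];
    scores.push(r.score);
    grouped.set(r.customerId, scores);
  }
  const result = new Map<string, number | null>();
  grouped.forEach((scores, customerId) => result.set(customerId, nps(scores)));
  return result;
}

// Most frequent tagged root causes, highest count first (ties broken alphabetically).
function topRootCauses(responses: SurveyResponse[], n = 10): Array<[string, number]> {
  const counts = new Map<string, number>();
  for (const r of responses) {
    if (r.rootCause) counts.set(r.rootCause, (counts.get(r.rootCause) ?? 0) + 1);
  }
  const entries: Array<[string, number]> = [];
  counts.forEach((count, cause) => entries.push([cause, count]));
  entries.sort((a, b) => b[1] - a[1] || a[0].localeCompare(b[0]));
  return entries.slice(0, n);
}

const responses: SurveyResponse[] = [
  { customerId: "acme", score: 10 },
  { customerId: "acme", score: 9 },
  { customerId: "acme", score: 3, rootCause: "Pricing" },
  { customerId: "globex", score: 6, rootCause: "Slow support" },
  { customerId: "globex", score: 8 },
  { customerId: "globex", score: 2, rootCause: "Pricing" },
];

const byCustomer = npsByCustomer(responses); // acme → 33, globex → -67
```

Here "acme" has 2 promoters and 1 detractor out of 3 responses, so its NPS is round(100 × 1/3) = 33; "globex" has 0 promoters and 2 detractors, giving round(-66.7) = -67.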

🔄 Currently in progress

- Deploy (Send Flow) — moving from a fragile, rigid V1 design to a reliable, independent V2 service. Its deep dependency on shared campaign infrastructure is being wrapped behind clean interfaces.

- Analytics Dashboard — replacing the old UX and slow queries with a modern React frontend and an optimised NestJS backend, aiming to deliver insights in milliseconds.
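Wrapping a shared dependency behind a clean interface is essentially the adapter pattern (sometimes called an anti-corruption layer). A minimal sketch, assuming a hypothetical legacy campaign row shape and a V2-facing `CampaignGateway` interface; neither is taken from the actual codebase, and the column names and channel codes are invented for illustration.

```typescript
// What the V2 Deploy service wants to work with.
interface Campaign {
  id: number;
  surveyId: number;
  channel: "email" | "sms";
  scheduledAt: Date | null;
}

// The only contract the Deploy module depends on.
interface CampaignGateway {
  findBySurvey(surveyId: number): Campaign[];
}

// Hypothetical row from the shared legacy campaign table:
// coded flags and epoch-second timestamps, as hand-mapped JDBC code often yields.
interface LegacyCampaignRow {
  CAMPAIGN_ID: number;
  SURVEY_ID: number;
  CHANNEL_CD: string; // "E" or "S" (assumed codes)
  SCHED_TS: number;   // epoch seconds, 0 when unscheduled
}

// Adapter: translates legacy rows into the V2 model in exactly one place.
class LegacyCampaignAdapter implements CampaignGateway {
  private readonly fetchRows: (surveyId: number) => LegacyCampaignRow[];
  constructor(fetchRows: (surveyId: number) => LegacyCampaignRow[]) {
    this.fetchRows = fetchRows;
  }
  findBySurvey(surveyId: number): Campaign[] {
    return this.fetchRows(surveyId).map((row): Campaign => ({
      id: row.CAMPAIGN_ID,
      surveyId: row.SURVEY_ID,
      channel: row.CHANNEL_CD === "S" ? "sms" : "email",
      scheduledAt: row.SCHED_TS > 0 ? new Date(row.SCHED_TS * 1000) : null,
    }));
  }
}

// In-memory rows stand in for the shared legacy table.
const fakeRows: LegacyCampaignRow[] = [
  { CAMPAIGN_ID: 7, SURVEY_ID: 10, CHANNEL_CD: "S", SCHED_TS: 1700000000 },
  { CAMPAIGN_ID: 8, SURVEY_ID: 10, CHANNEL_CD: "E", SCHED_TS: 0 },
];
const gateway: CampaignGateway = new LegacyCampaignAdapter((id) => fakeRows.filter((r) => r.SURVEY_ID === id));
const campaigns = gateway.findBySurvey(10);
```

When Deploy eventually owns its own tables, a V2-native implementation of `CampaignGateway` could replace the adapter without touching the service that consumes it.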

🗺️ Strategic Comparison

| Category | V1 — The Legacy Monolith | V2 — The App-Service Future |
|---|---|---|
| Architecture | Monolithic / Opaque — 1,000+ Java files, shared tables, no enforced boundaries | Decoupled App-Services — domain modules, strict layering, Facade pattern enforced |
| Development | “Whack-a-Mole” debugging — fix one thing, break another | Predictable & tested — surgical changes, 100% E2E API coverage |
| UX / Performance | Slow queries on live tables · Old JSP/React 15 UI | Modern React · Optimized NestJS queries · Real-time analytics |
| Stack | Struts · Ant · hand-rolled JDBC (V1) | NestJS · TypeScript · TypeORM · Turborepo (V2) |

💡 The Lesson

The most important thing we learned is that you don’t fix a legacy system by refactoring it forever. You fix it by building a better future right next to it. This approach has moved us from a system that limits our potential to one that empowers it.

One nav tab at a time. That’s how you strangle a monolith without breaking what’s already working.