AI-Powered Content Moderation in Conversations

AI-Powered Content Moderation is a proactive safety feature integrated into the Conversations module, designed to maintain a secure and professional environment for employee feedback. Using machine learning, the system automatically detects and intercepts harmful content, such as hate speech, personal threats, sexually explicit material, or doxxing, in real time. This removes the need for constant manual monitoring by moderators.

Watch this quick walkthrough to understand how it works:

The system uses an asynchronous "Optimistic UI" architecture. When a participant submits a response, the platform displays it to the author immediately so the experience stays fluid. At the same time, the content is scanned by the AI in the background. If the scan flags the content as a high-severity violation, the system keeps the response hidden from all other participants. This design ensures that harmful content is never publicly visible, protecting the forum's integrity while preserving its social nature.
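The optimistic flow described above can be sketched with Python's `asyncio`. This is a minimal illustration under stated assumptions, not the platform's implementation: `classify` is a hypothetical, keyword-based stand-in for the AI model, and the `Response` fields and `"pending"`/`"published"`/`"hidden"` states are invented for the example. The key point is that `submit` schedules the scan without awaiting it, while `visible_to` lets the author see their own response at once and shows it to others only after the scan clears it.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class Response:
    author: str
    text: str
    # Hypothetical lifecycle: "pending" -> "published" or "hidden".
    status: str = "pending"


async def classify(text: str) -> str:
    """Hypothetical stand-in for the AI model: returns a severity label."""
    await asyncio.sleep(0.01)  # simulate model latency
    return "high" if "threat" in text.lower() else "none"


async def scan(response: Response) -> None:
    # Background scan: high-severity content stays hidden from everyone but the author.
    severity = await classify(response.text)
    response.status = "hidden" if severity == "high" else "published"


def visible_to(response: Response, viewer: str) -> bool:
    # Optimistic UI: the author always sees their own response;
    # other participants see it only after the scan clears it.
    return viewer == response.author or response.status == "published"


async def submit(author: str, text: str) -> tuple[Response, asyncio.Task]:
    response = Response(author, text)
    # Schedule the scan without awaiting it, so the author's view updates at once.
    task = asyncio.create_task(scan(response))
    return response, task


async def demo() -> None:
    response, pending_scan = await submit("alice", "This is a threat")
    print(visible_to(response, "alice"), visible_to(response, "bob"))  # True False
    await pending_scan
    print(response.status, visible_to(response, "bob"))  # hidden False


if __name__ == "__main__":
    asyncio.run(demo())
```

Treating "pending" the same as "hidden" for other viewers is what keeps flagged content from ever being publicly visible, even during the short window before the scan finishes.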

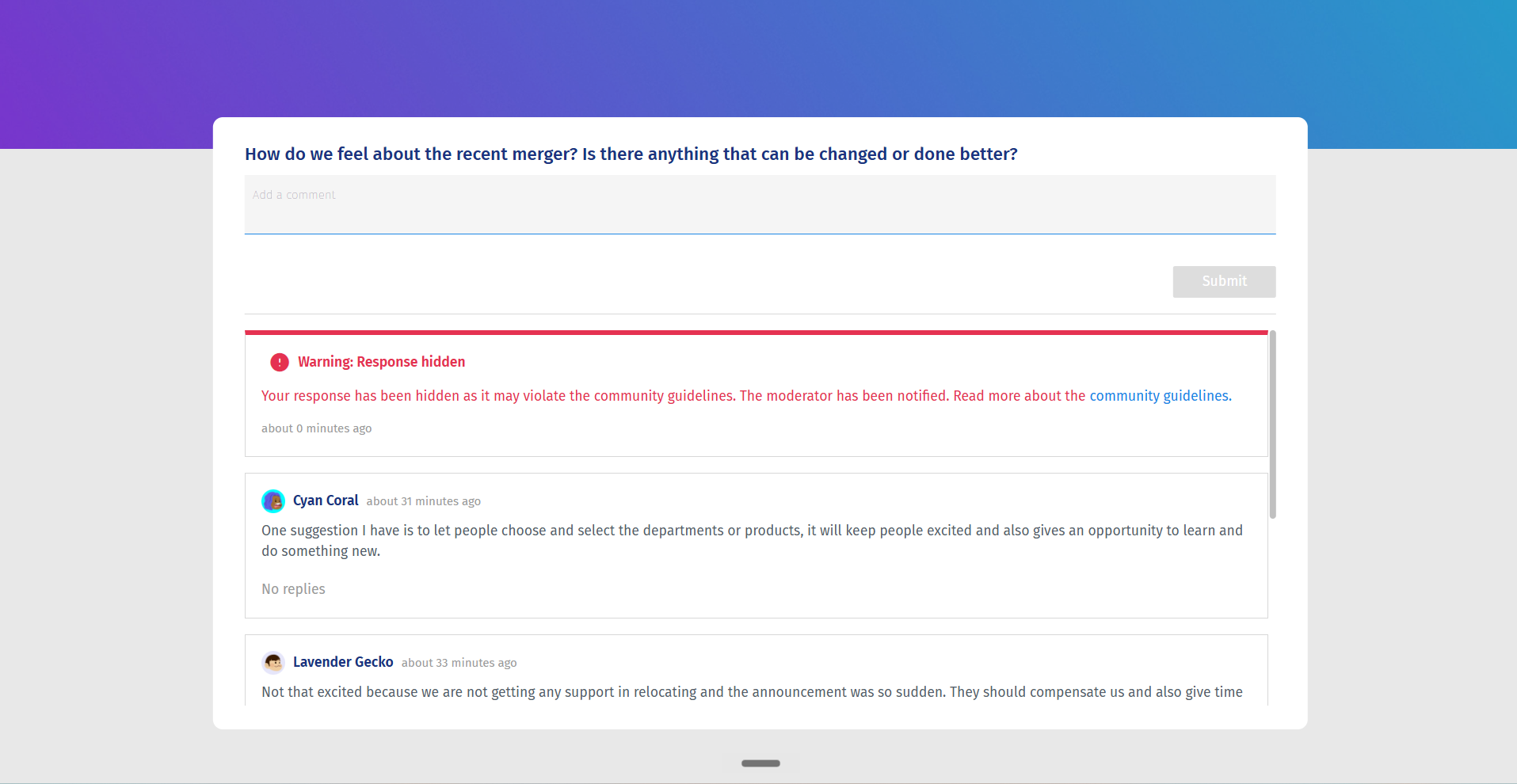

Transparency and safety are foundational to the participant experience. If your content triggers a moderation flag, the system provides clear feedback regarding your status:

- Immediate Feedback Loop: Upon clicking submit, your response is immediately visible in your view, maintaining the spontaneity of the conversation.

- Automatic Content Hiding: If your response is flagged by the AI for violating community guidelines, it remains hidden from all other participants to protect the community.

- Inline Warnings and Guidance: If your post is hidden, you will see an inline warning message. This notification explains that your post has been restricted and provides a direct link to the tool’s Community Guidelines, fostering an educational approach to workplace ethics.

- Participant Restrictions: In cases of persistent violations, a moderator may restrict your account from this specific conversation. If you are restricted, you cannot post new responses: your input interface is disabled, although you can still read existing discussions. A clear error message notifies you of your restricted status.
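The participant-facing rules above (an inline warning on a hidden post, and a disabled input for restricted participants who can still read) can be modeled as two small checks. This is a hypothetical sketch: `ConversationState`, `inline_warning`, `composer_state`, the warning wording, and `GUIDELINES_URL` are all invented for illustration and do not reflect the product's actual messages or links.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical placeholder; the real Community Guidelines link is product-specific.
GUIDELINES_URL = "https://example.com/community-guidelines"


@dataclass
class ConversationState:
    hidden_response_ids: set[str] = field(default_factory=set)
    restricted_participants: set[str] = field(default_factory=set)


def inline_warning(state: ConversationState, response_id: str) -> Optional[str]:
    """Warning shown to the author under a hidden response (wording is illustrative)."""
    if response_id in state.hidden_response_ids:
        return (
            "This post has been hidden from other participants. "
            f"Please review the Community Guidelines: {GUIDELINES_URL}"
        )
    return None


def composer_state(state: ConversationState, participant: str) -> dict:
    """What the participant's input area allows; reading is never blocked."""
    if participant in state.restricted_participants:
        return {
            "can_read": True,
            "can_post": False,  # input interface disabled
            "error": "You have been restricted from posting in this conversation.",
        }
    return {"can_read": True, "can_post": True, "error": None}
```

Keeping `can_read` separate from `can_post` mirrors the behavior described above: a restriction removes the ability to contribute but never the ability to follow the discussion.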

As a moderator, you have access to a robust suite of tools designed to manage forum integrity with minimal effort. The workflow centers on proactive alerts and centralized dashboard management.

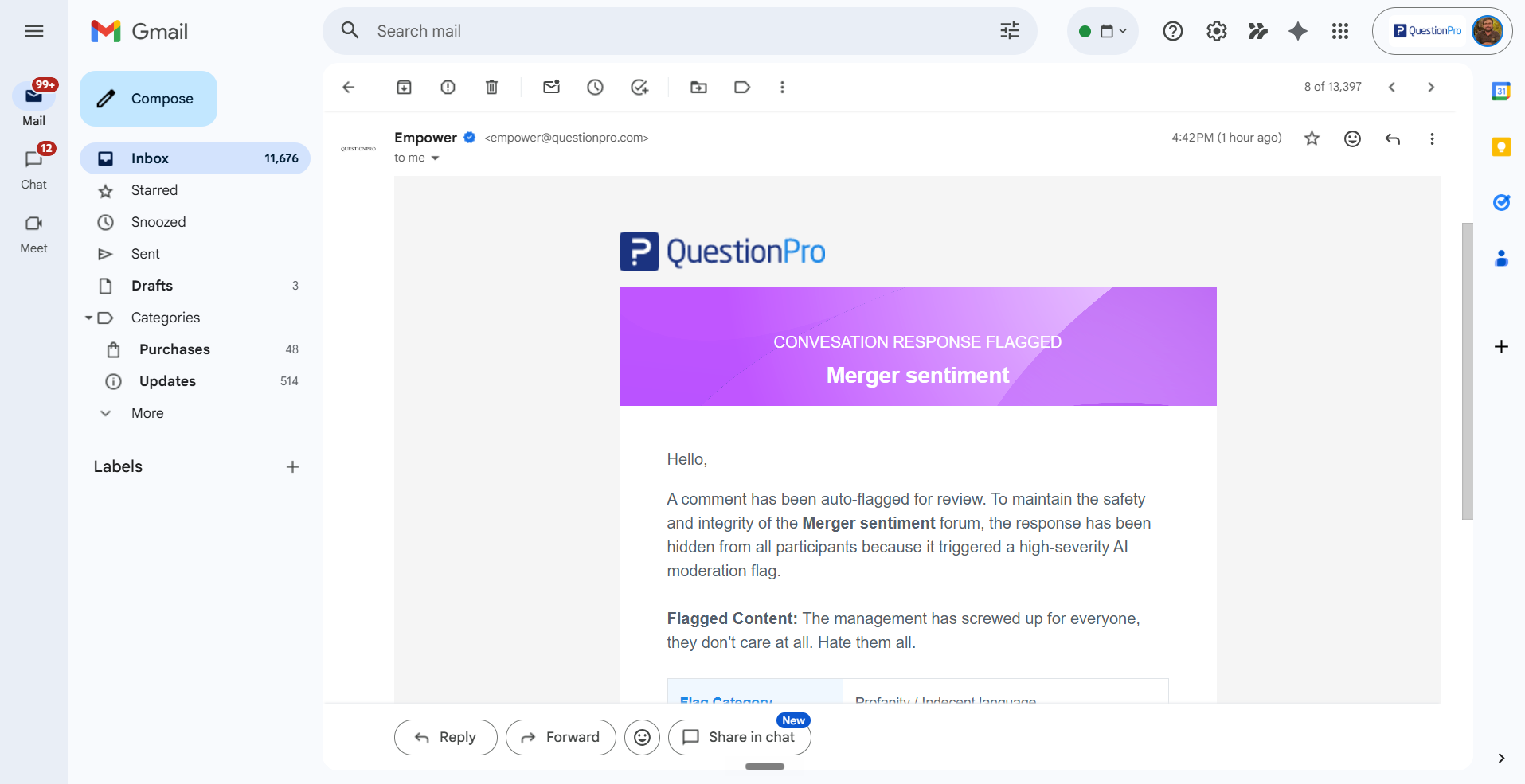

- Real-Time Transactional Alerts: When the AI identifies a high-severity violation, you receive an automated email notification. This alert includes the full text of the flagged comment, the participant’s anonymized ID, the category of the violation (e.g., Hate Speech, Threats), and the system’s confidence score.

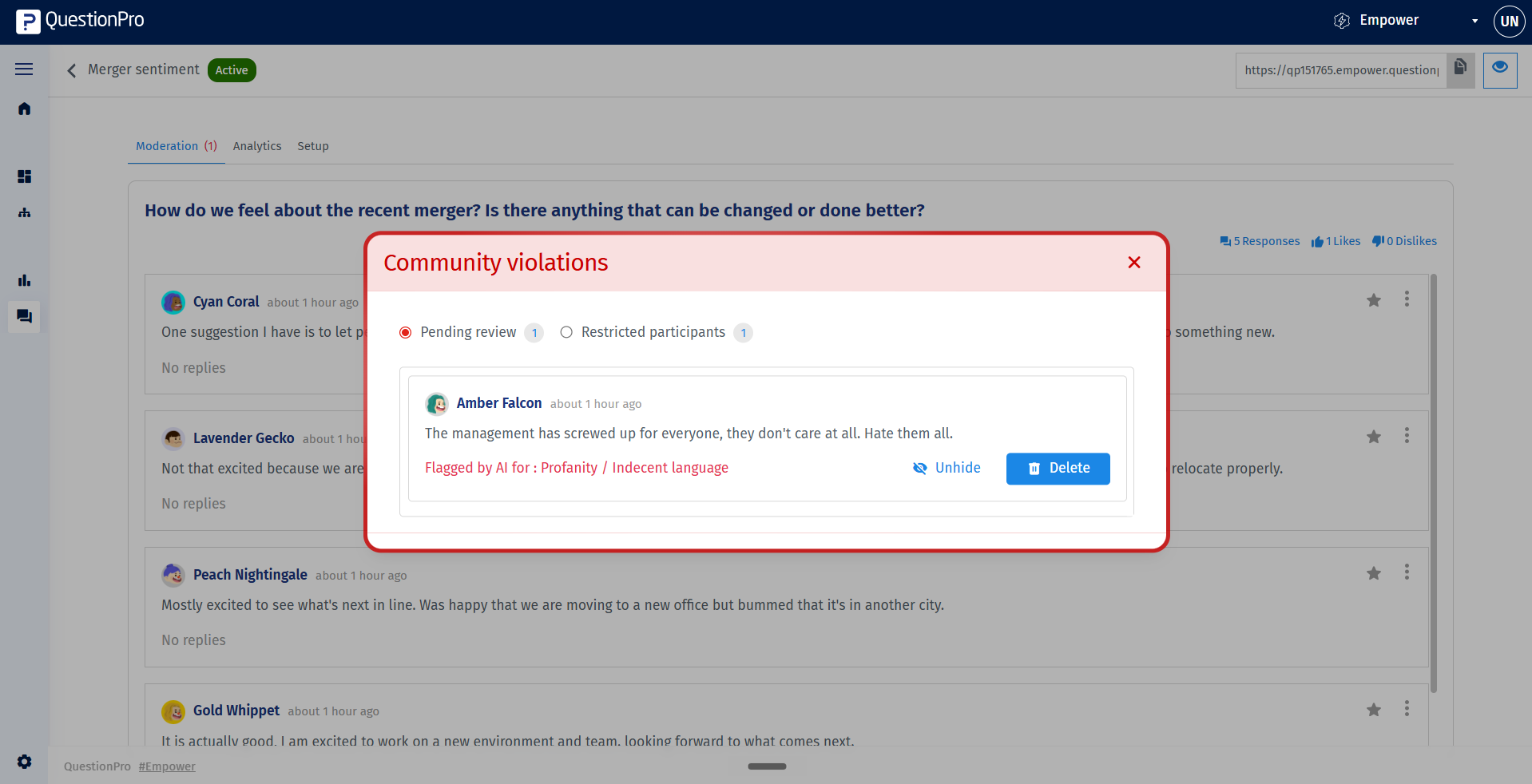

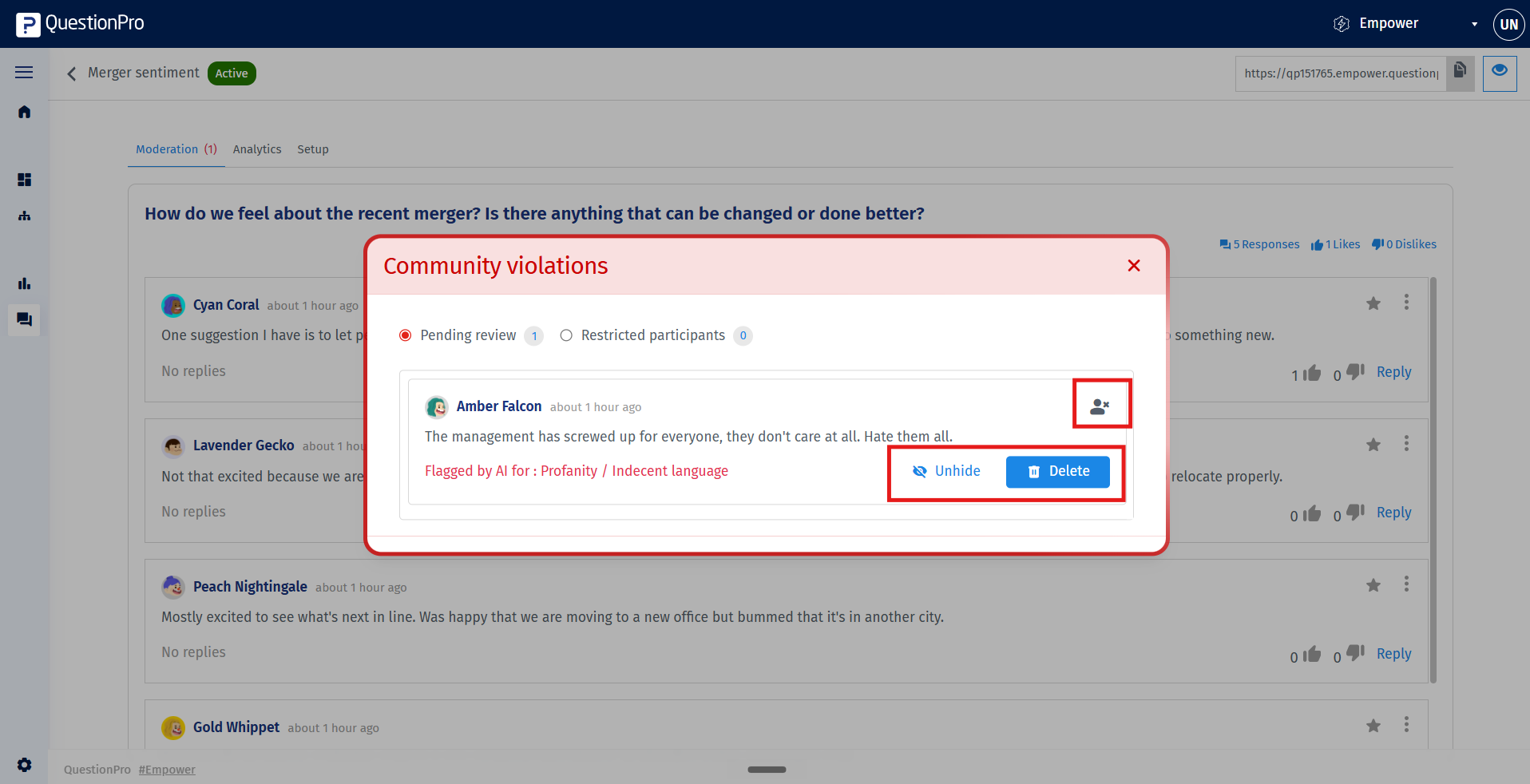
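The alert described above carries four pieces of information: the flagged text, the anonymized participant ID, the violation category, and a confidence score. A minimal sketch of such a payload follows. The class and function names are hypothetical, and the assumption that confidence is a probability between 0 and 1 is ours; the actual email format may differ.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModerationAlert:
    comment_text: str
    participant_id: str  # anonymized ID, not the participant's name
    category: str        # e.g. "Hate Speech", "Threats"
    confidence: float    # assumed to be a probability in [0, 1]

    def __post_init__(self) -> None:
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0 and 1")


def should_email(severity: str) -> bool:
    # Only high-severity violations trigger a moderator email.
    return severity == "high"


def render_email(alert: ModerationAlert) -> str:
    # Plain-text body carrying the four fields the alert includes.
    return (
        "A response was flagged and hidden.\n"
        f"Category: {alert.category}\n"
        f"Confidence: {alert.confidence:.0%}\n"
        f"Participant: {alert.participant_id}\n"
        f"Comment: {alert.comment_text}\n"
    )
```

For example, an alert built with `ModerationAlert("...", "anon-7f3a", "Threats", 0.92)` renders its score as `Confidence: 92%`.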

- Restricted Responses view: Open the centralized list of flagged responses by clicking the link in your email alert, or by opening the Community Violation modal within your Empower conversation. Here, you can review a queue of all flagged responses.

- Moderation Actions: You can perform three distinct actions on any flagged item:

    - Unhide: If you determine that the flag was a false positive, select this option to restore the comment to the forum. The visibility restriction is lifted immediately for all participants.

    - Delete: This permanently removes the response from the conversation. Note that the warning previously displayed to the author remains visible until they refresh their browser session.

    - Restrict Participant: This blocks the individual from submitting further contributions to the conversation. It is an effective measure for participants who persistently violate community guidelines; they see an error message whenever they attempt to post.
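The three moderator actions can be viewed as transitions on a queue of flagged responses. The sketch below is hypothetical: `ModerationQueue`, `Action`, and the set-based bookkeeping are invented for illustration. One detail the description does not specify is whether restricting a participant also removes their flagged response from the queue; here we assume it stays until a moderator unhides or deletes it.

```python
from dataclasses import dataclass, field
from enum import Enum


class Action(Enum):
    UNHIDE = "unhide"
    DELETE = "delete"
    RESTRICT = "restrict"


@dataclass
class ModerationQueue:
    flagged: dict[str, str] = field(default_factory=dict)  # response_id -> author_id
    published: set[str] = field(default_factory=set)
    deleted: set[str] = field(default_factory=set)
    restricted: set[str] = field(default_factory=set)  # participant IDs

    def apply(self, action: Action, response_id: str) -> None:
        author = self.flagged[response_id]  # raises KeyError if not flagged
        if action is Action.UNHIDE:
            # False positive: restore the response for every participant.
            del self.flagged[response_id]
            self.published.add(response_id)
        elif action is Action.DELETE:
            # Permanent removal from the conversation.
            del self.flagged[response_id]
            self.deleted.add(response_id)
        elif action is Action.RESTRICT:
            # Block further posts by the author; whether the flagged response
            # also leaves the queue is an assumption (here it stays).
            self.restricted.add(author)

    def can_post(self, participant: str) -> bool:
        return participant not in self.restricted
```

Restriction is keyed on the author rather than the response, so it applies to all of that participant's future posts in the conversation, not just the flagged one.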