You’re not the kind of leader who ignores what’s happening on the floor. You run engagement surveys. You care about culture. And at some point, you’ve probably thought: “I wish my employees had a place to talk openly, not just in a survey, but in real time.”

So you open up Conversations, an internal forum where your team can share ideas, raise concerns, and feel genuinely heard. It’s a great move. But the moment you give everyone a microphone, a question follows: what if someone uses it the wrong way?

It probably won’t be most of your employees. But it only takes one hostile comment, one personal attack, or one piece of harmful content to make others feel unsafe and to make you question whether the forum was worth opening at all.

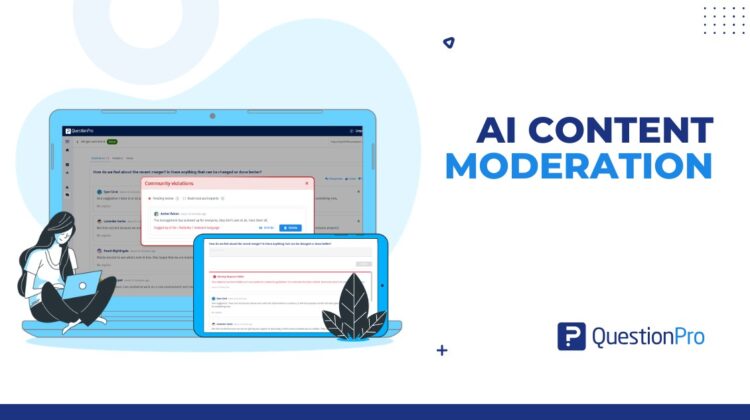

That’s exactly why we built AI Content Moderation into the Empower module, so you never have to choose between giving your people a voice and keeping your culture safe.

The Real Risk Isn’t a Toxic Culture. It’s a Momentary Lapse.

Most HR leaders who hesitate to launch an open forum aren’t worried about systemic toxicity; they’re worried about edge cases. The frustrated employee who has a bad week and posts something they’ll regret. The rare bad actor who sees anonymity as a loophole. The comment that goes up at 6 PM on a Friday and sits there, visible to your entire global team, until someone notices it Monday morning.

These situations are uncommon, but their impact is outsized. Harmful content that lingers, even for a few hours, can erode the very trust you’re trying to build. Our AI Moderation closes that window entirely, around the clock, so you’re never caught off guard.

Protect the Forum Without Killing the Energy

The worst version of moderation is one your employees can feel. A “pending approval” queue signals distrust; it tells people their voice is on probation before anyone’s even read it. That kills participation fast.

Our approach uses an Optimistic UI architecture that makes safety invisible to well-intentioned participants:

- The author sees their post go live instantly. No friction, no waiting.

- Everyone else only sees the post once it passes the AI’s background scan.

- The result: a forum that feels open and real, with safety built in at the infrastructure level.
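For readers who want to picture the mechanics, the visibility rule described above can be sketched in a few lines of Python. This is a minimal illustration, not the Empower implementation: the names `Post`, `ScanStatus`, `is_visible_to`, and `feed_for` are hypothetical, and the choice to keep a flagged post visible to its own author is an assumption the article does not confirm.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class ScanStatus(Enum):
    """Hypothetical states for the background AI scan."""
    PENDING = "pending"    # scan has not finished yet
    APPROVED = "approved"  # scan passed; safe to show everyone
    FLAGGED = "flagged"    # scan found a violation; hidden from others


@dataclass
class Post:
    post_id: int
    author_id: str
    text: str
    status: ScanStatus = ScanStatus.PENDING  # every new post starts unscanned


def is_visible_to(post: Post, viewer_id: str) -> bool:
    """Optimistic rule: the author sees the post at once, others after approval.

    Assumption: the author keeps seeing their own post even if it is flagged.
    """
    if viewer_id == post.author_id:
        return True
    return post.status is ScanStatus.APPROVED


def feed_for(posts: list[Post], viewer_id: str) -> list[Post]:
    """Return only the posts this viewer is allowed to see."""
    return [p for p in posts if is_visible_to(p, viewer_id)]
```

In this sketch a brand-new post appears in its author's feed immediately and in everyone else's feed only once its status becomes `APPROVED`, which is why no one ever sees a "pending approval" label.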

You Stay in Control. Always.

AI moves fast, but it doesn’t make judgment calls — you do. When the system flags a high-severity violation (hate speech, personal threats, explicit content, or doxxing), you’re notified immediately with the full context: the flagged text, the participant’s anonymized ID, the category of the violation, and a confidence score.

From a single dashboard, you can:

- Unhide the post if the AI got it wrong. Sarcasm, context, and nuance are easy to misread, and your judgment wins.

- Delete content that genuinely violates your community guidelines.

- Restrict a participant from posting further without removing them from the conversation entirely. They can still read and observe, just not post.
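As a rough mental model, the notification and the three dashboard actions might look like the Python sketch below. Everything here is illustrative: `Flag`, `Category`, `ModerationDesk`, and their methods are hypothetical names, and the 0-to-1 confidence scale is an assumption, since the article does not specify how the score is expressed.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Category(Enum):
    """The high-severity categories named in the article."""
    HATE_SPEECH = "hate_speech"
    PERSONAL_THREAT = "personal_threat"
    EXPLICIT_CONTENT = "explicit_content"
    DOXXING = "doxxing"


@dataclass(frozen=True)
class Flag:
    """Context sent to the admin when a post is flagged."""
    post_id: int
    flagged_text: str
    participant_id: str  # anonymized ID, never the real identity
    category: Category
    confidence: float    # assumed to be on a 0.0-1.0 scale


@dataclass
class ModerationDesk:
    """Hypothetical in-memory stand-in for the admin dashboard."""
    hidden: set[int] = field(default_factory=set)
    deleted: set[int] = field(default_factory=set)
    restricted: set[str] = field(default_factory=set)

    def receive(self, flag: Flag) -> None:
        """A flagged post stays hidden until a human decides."""
        self.hidden.add(flag.post_id)

    def unhide(self, flag: Flag) -> None:
        """The AI got it wrong: restore the post."""
        self.hidden.discard(flag.post_id)

    def delete(self, flag: Flag) -> None:
        """The post genuinely violates the guidelines: remove it."""
        self.hidden.discard(flag.post_id)
        self.deleted.add(flag.post_id)

    def restrict(self, flag: Flag) -> None:
        """Block further posting; reading is unaffected."""
        self.restricted.add(flag.participant_id)

    def can_post(self, participant_id: str) -> bool:
        return participant_id not in self.restricted

    def can_read(self, participant_id: str) -> bool:
        return True  # restricted participants can still read and observe
```

The point of the sketch is the division of labor: the flag only hides the post, and nothing is restored, deleted, or restricted until a person calls one of the three actions.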

The Forum Your Employees Deserve

The best reason to open a Conversations forum is simple: your employees have things worth saying, and hearing them makes your organization better. The risk of a few bad actors shouldn’t be the reason that never happens.

With AI Content Moderation, you can launch with confidence. The forum stays open, the engagement stays high, and the rare moment of harmful content is handled before anyone else even sees it.

AI Content Moderation is now included as a standard enhancement in the Empower module. No add-on required.

See how AI Moderation works — and open the forum your team has been waiting for.