Whilst every discussion amongst social and survey researchers is sure to reference representative sampling and random probability sampling theory, the cognitive stress a survey places on respondents is often overlooked. Stated plainly: data quality depends directly on how well respondents can understand and use the survey.

This concludes the technical portion of this post.

But the issue remains: if your survey is not effective, it will have a negative impact on the findings. In other words, garbage in, garbage out.

Questionable data quality isn’t the only risk, either. If you find, while data is still being gathered, that the survey needs better questions, you also risk respondent fatigue and confusion, the chore of sorting through responses (which likely mix good information with poor), and possibly having to start the entire research process over, all of which inevitably leads to increased research costs.

Also not good.

There is also the scenario of running a survey, getting the results, and finding that the survey didn’t meet its intended purpose and the results aren’t worth anything in the end. That leaves the question: do we start completely over with a new survey and a new group? Nobody likes realizing they wasted budget. Even worse is realizing that, had the survey just been tested first, the needed changes could probably have been identified and made early enough to avoid the lost time and resources.

Testing the survey prior to circulation

The solution, then, is to thoroughly, objectively test the survey before it’s distributed. Two of the most common methods:

- peer/subordinate/supervisory review

- soliciting test responses from Facebook friends

One might argue that neither of these methods would squarely be considered thorough or objective, and we could still end up with, well, garbage. Albeit slightly less stinky.

Introducing TryMyUI

We’ve mentioned TryMyUI before, and now a new integration makes it incredibly easy to submit stress/usability tests for fast, effective, objective feedback on your surveys, before they are published.

Here’s how QuestionPro’s integration with TryMyUI works:

Step 1: Create your survey

The first step is to create your QuestionPro survey as you normally would, copyedit it, get some internal reviews, ask Facebook friends, etc.

Step 2: Enter your scenario

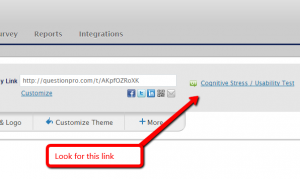

From the Edit Survey tab just next to the Live Survey Link for your survey, click the link that says “Cognitive Stress / Usability Test.”

A sample scenario is provided, which you may edit to clarify what you are looking for from the testers. Be specific about the scenario you want to test: the more information you give the testers, the better the test results. Compare “you just purchased a luxury vehicle that you love, though you’re still a bit nervous about the price you paid, even though you had a great experience going through the purchase process itself, and you’ve been asked to complete a survey about your purchase experience” with “you’re being asked to complete a survey based on a recent purchase.”

Step 3: Confirm your order

Once you have adjusted the scenario, you can confirm your payment information and place your order.

Here’s what you’ll get with your usability test

Once your tests are complete (typically within a few hours), you will receive an email with the following:

- A video of each tester’s experience, from starting the test through to completion, based on the scenario you provided.

- The answers to four standardized questions that the experts at TryMyUI designed to give you critical feedback, which you can use to ease the cognitive stress your survey may place on respondents.

Now, there’s only one thing left for you to do: Go test your next survey!

References & Further Reading:

Bureau of Labor Statistics & TryMyUI – Case Study

http://trymyui.com/whitepapers/BLSCaseStudy_TryMYUI.pdf